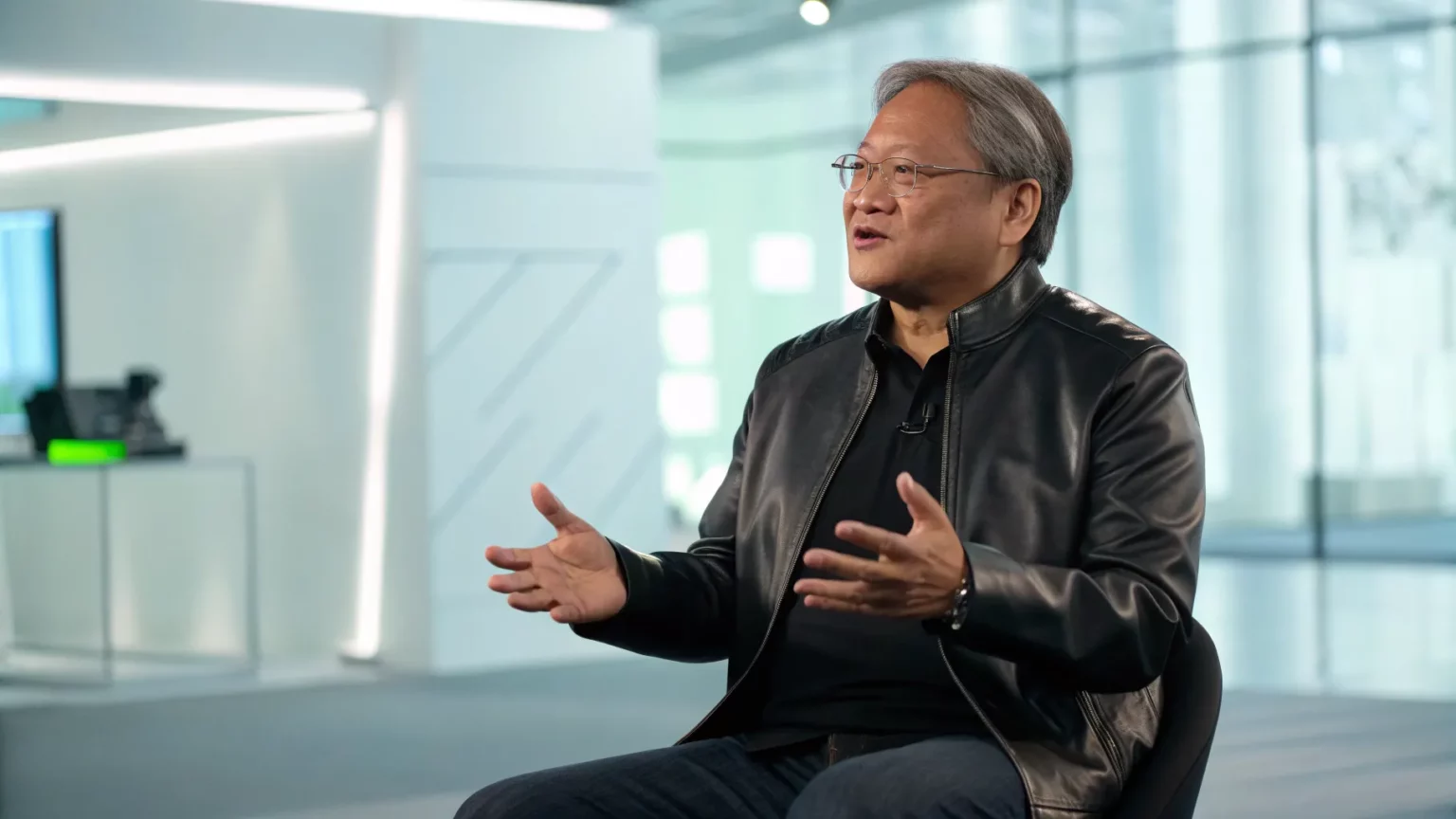

In a rare television appearance, NVIDIA CEO Jensen Huang outlined how artificial intelligence is reshaping business and daily life, speaking on Fox Business’ “The Claman Countdown.” The wide-ranging interview touched on the pace of adoption, the compute behind new AI tools, and why the technology matters for companies large and small.

Huang’s comments come as AI models spread from research labs into offices, homes, and factories. The conversation highlighted the race to build powerful systems, the supply chain needed to support them, and the policy questions hanging over the sector. The exchange offered a timely snapshot of where the industry stands and where it may head next.

Context: A Chipmaker at the Center of AI

NVIDIA sits at the heart of today’s AI build-out. Its graphics processing units, or GPUs, are widely used to train and run large models that power chatbots, search, and image tools. Cloud providers and startups rely on those chips to meet rising demand.

That demand has grown as organizations look to automate tasks, analyze data, and create content. Developers are rolling out new services at a rapid clip, while enterprises test AI assistants for customer support, software coding, and design work. This shift requires vast computing power and steady access to high-bandwidth memory and networking gear.

Huang’s appearance underscored NVIDIA’s prominence in this trend. The company’s technology sits inside many of the data centers that enable modern AI. Investors watch its supply updates and product roadmap closely because both influence how quickly new AI services reach the market.

Key Themes From the Interview

“NVIDIA CEO Jensen Huang joins ‘The Claman Countdown’ to discuss the impact of artificial intelligence and more in a wide-ranging interview.”

While the conversation ranged across topics, several threads stood out:

- AI is moving from pilots to production across sectors.

- Compute, energy, and supply-chain planning remain central concerns.

- Policy debates over safety, transparency, and jobs are intensifying.

Huang has long argued that accelerated computing can lower costs for complex workloads. That idea is now being tested by enterprises that must balance performance with budgets and power limits. The outcome will affect who can deploy AI at scale and how fast.

Industry Impact and Supply Questions

Industry leaders face a delicate balance: building faster while securing chips, memory, and data center capacity. Cloud platforms continue to expand their AI infrastructure. At the same time, startups compete for access to computing clusters to train custom models.

Analysts say supply remains tight for high-demand chips, even as manufacturers increase output. The situation can influence which companies launch features first and at what cost. It also shapes how AI workloads are distributed between public clouds and on-premises systems.

For enterprises, the focus has shifted from proofs of concept to measurable results. Leaders want tools that reduce customer wait times, speed up coding, and improve search quality. They also want clear ways to manage security and guard company data.

Societal Effects and Policy Debate

As AI spreads, its impact on jobs, education, and media grows. Companies are retraining workers to use AI tools, while schools weigh how to teach with and about the technology. Newsrooms and studios are testing AI for production tasks and grappling with rights and attribution.

Regulators are pressing for transparency, watermarking, and safety assessments. Businesses seek clear rules that let them ship new features without legal surprises. Huang’s remarks fit into a broader call for standards that reward responsible deployment while keeping competition open.

What to Watch Next

Several signals will shape the next phase:

- New chip generations and their efficiency gains.

- Progress on data center energy use and cooling.

- Adoption of smaller, task-specific models inside companies.

- Evolving rules on privacy, copyright, and AI safety.

Investors will monitor how quickly fresh capacity comes online. Developers will watch whether tools become easier to run on modest hardware. Policymakers will look for proof that safeguards work without stifling useful products.

Huang’s appearance delivered a clear message: AI is moving from promise to practice, supported by heavy infrastructure spending and rising interest from mainstream companies. The next chapter will be defined by access to compute, practical gains inside businesses, and the rules that guide how AI is built and used. As supply improves and standards take shape, the winners will be those who deliver reliable systems, prove value, and earn trust.